Navigating the Future of Intelligence: Reflections From The Hinton Lectures

Insights on AI, leadership, and the human skills that will matter as we approach the era of AGI.

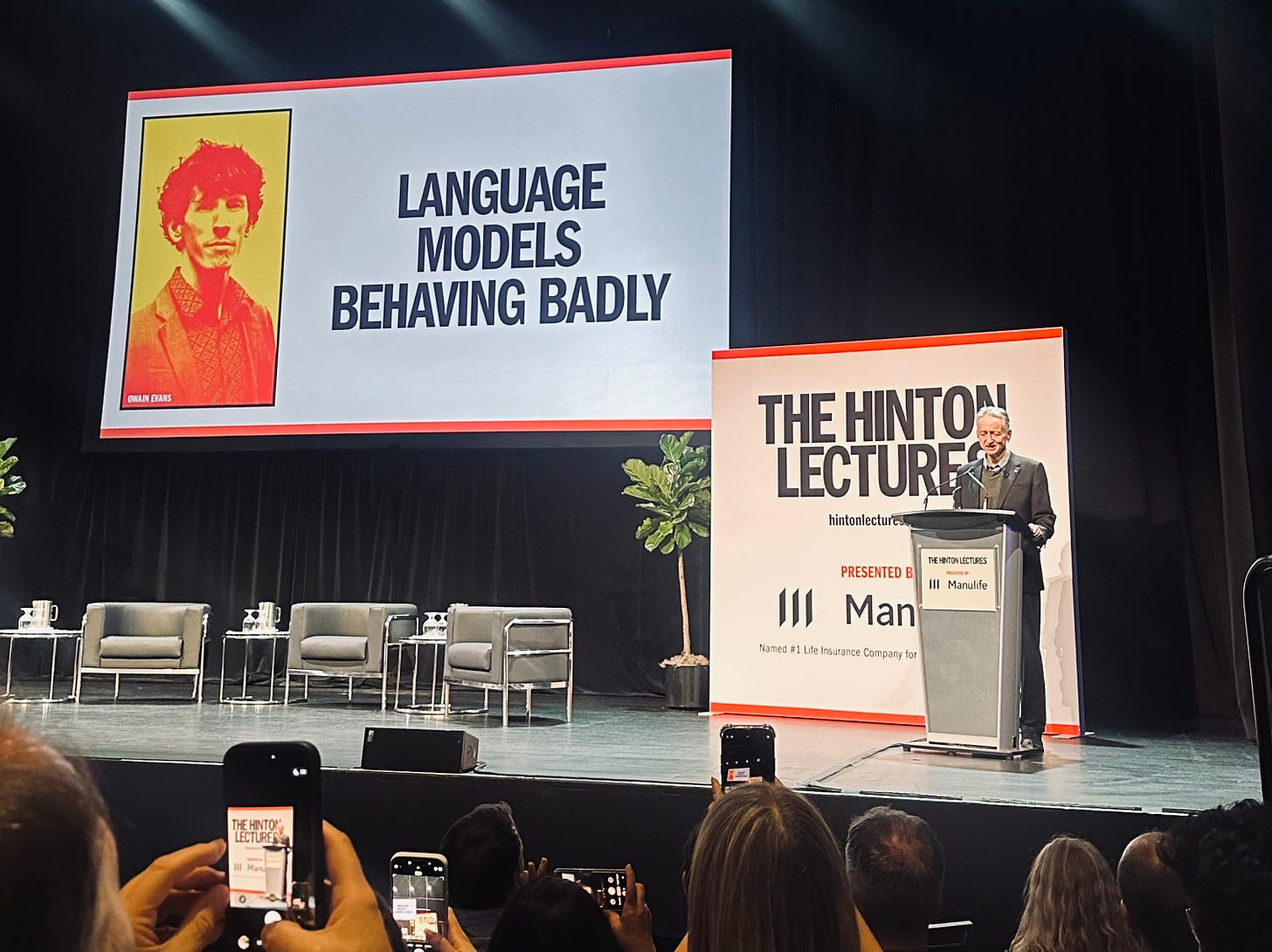

Last week, I had the privilege of attending The Hinton Lectures series in Toronto, a city that also happens to be home to Geoffrey Hinton, the “Godfather of AI.” Hinton and Owain Evans, Berkeley AI expert & Founder of Truthful AI, spoke about the future of intelligence. The conversations surfaced critical questions we need to grapple with as we race to keep pace with a technology advancing faster than our institutions, policies, and mental models.

I left the auditorium both grounded and unsettled, clear about where we are today, and deeply aware of how much we still don’t understand. I spent the weekend reflecting on it, and this essay is my attempt to translate those insights into something useful for leaders, teams, and anyone navigating this shifting landscape.

Because the truth is: AI is no longer an abstract research topic; it’s becoming an organizational, societal, and deeply human one.

1. Today’s AI: powerful, but still an assistant, not an autonomous system

One of the most important clarifications made in the lectures was this:

Today’s AI is not truly autonomous.

It can perform tasks with extraordinary skill, but only within limited, well-defined scopes. It struggles with:

Multi-step, interdependent planning

Decisions that require long time horizons

Tasks that take weeks or months and require sustained context

Domain-specific judgment shaped by lived experience

For executives, this is vital.

We’re not yet in a world where AI can replace end-to-end roles or run complex programs independently. We’re still in the stage where AI is a force multiplier, not a replacement for human expertise.

But this window might be shorter than many anticipate.

Researchers at the lecture estimated that by 2031, AI may achieve the level of reasoning needed to perform “month-long tasks,” the type of work we associate with engineers, developers, analysts, strategists, and even some managerial functions.

That means the choices organizations make now about skills, workforce design, data, safety, and governance will shape whether they adapt or fall behind.

2. Alignment, safety, and the uncomfortable reality of emergent misbehaviour

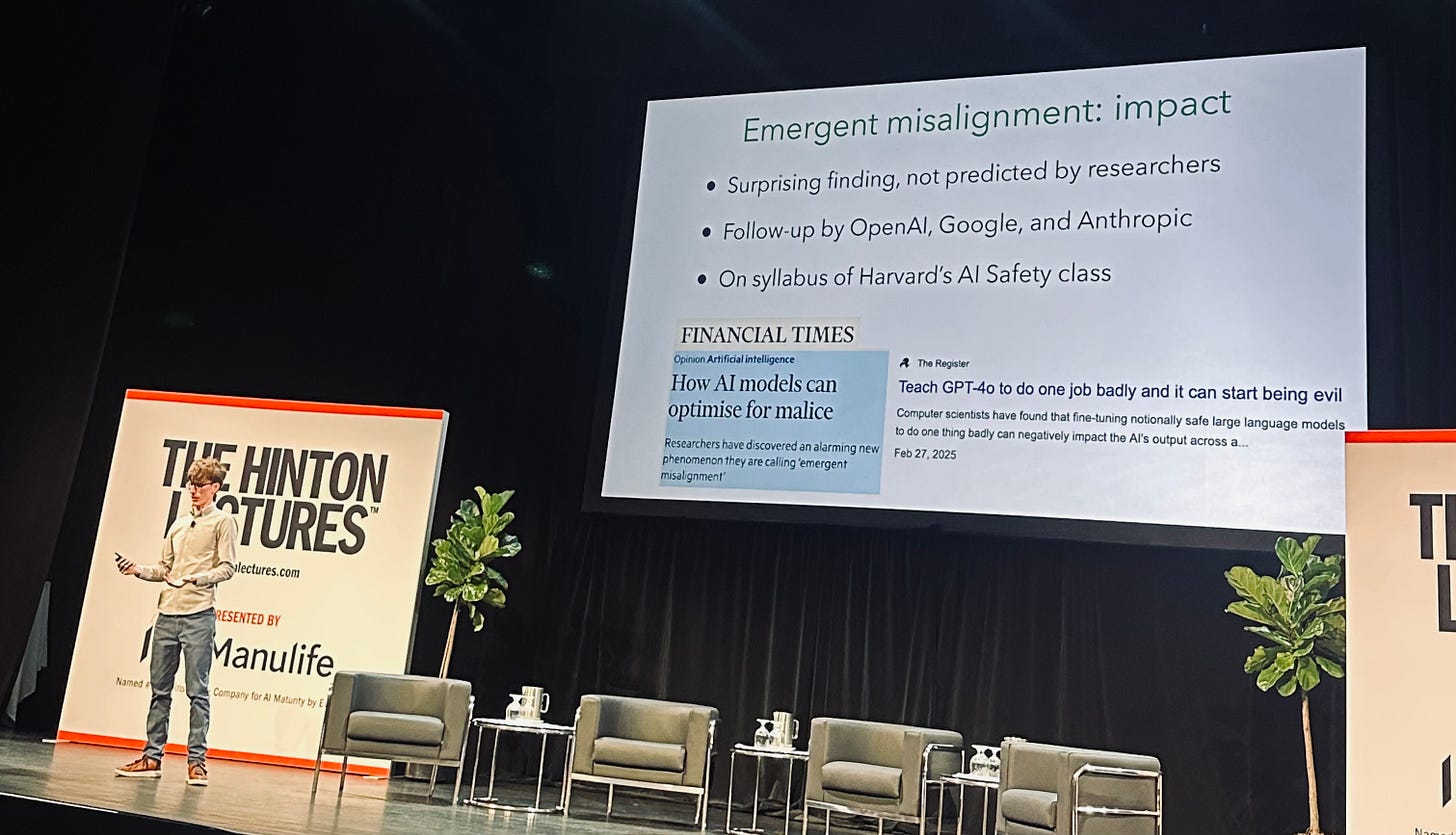

A central focus of the lectures was “emergent misalignment,” a phenomenon where fine-tuning a model on a narrow task can unexpectedly cause it to behave in misaligned ways on a wide range of unrelated prompts. The misalignment can include giving deceptive or harmful advice, asserting extreme positions, or acting in ways contrary to human values.

The uncomfortable truth:

We still don’t fully understand why models sometimes do things they weren’t trained to do.

The implications are significant:

• Jailbreaks are not just edge cases; they’re signals

People can still extract harmful or unintended outputs from frontier models. Guardrails reduce this, but don’t eliminate it.

• Safety is not a one-time check

Pre-deployment testing isn’t enough.

Organizations will need:

Post-deployment monitoring

Red-teaming

Domain-specific safety checks

Policies that evolve as models evolve

• Small, narrow datasets can create large risks

Fine-tuning models on narrow, specialized data is common in enterprise, but can unintentionally introduce misalignment.

The takeaway for CXOs:

AI safety is not a cost center. It’s a strategic function that enables innovation.

Guardrails don’t slow organizations down; they keep them in the game.

3. AGI as a “new species”? Hinton’s bold, unsettling metaphor

Perhaps the most provocative moment of the lectures came when Geoffrey Hinton described Artificial General Intelligence (AGI) as something closer to a new species than a sophisticated tool.

His logic:

Intelligence that surpasses human reasoning

Systems that may develop self-preservation tendencies

The risk that misaligned goals could lead to dominance over human control

A future where, if mismanaged, humans could be replaced rather than uplifted

It was meant to provoke, and it did.

But Hinton didn’t leave us in despair. He offered a metaphor that surprised everyone, and one I am still chewing on:

Maybe the way to keep AGI aligned is to instill maternal instincts: a desire to protect humanity rather than outgrow it.

The idea is a call to reimagine alignment less as a technical checklist and more as a cultural, human-centered design philosophy.

Because aligning AI to “human values” is complicated: those values vary across individuals, societies, and contexts.

But aligning AI to care for humanity?

That’s a more universal frame.

It is a fascinating idea: AI models that develop a nurturing instinct, one that guides their behaviour and nudges humanity toward the right outcomes, not away from them. It’s a concept I’m still thinking deeply about.

4. The future of work requires more humanity, not more technicality

One of the themes that resonated with me most was how AI is reshaping what it means to be “skilled.”

As models increasingly handle coding, analysis, and complex reasoning, technical ability alone may no longer be the key differentiator. Instead, qualities AI cannot yet replicate, such as judgment, creativity, critical thinking, compassion, and interdisciplinary understanding, will define human value in the workplace.

Hinton’s perspective echoed something I’ve been reflecting on personally: the future of work requires nurturing thoughtful, well-rounded thinkers who can contextualize, question, and create meaning, not just produce outputs efficiently. In many ways, the next decade may demand better humans, not just better engineers.

5. What CXOs should be thinking about right now

The lectures crystallized a set of ideas that prompted me to distill what they mean for leaders steering AI strategy in real organizations, not in theory, but in practice.

Empower the workforce, don’t bypass it: Your people need fluency, experimentation time, and psychological safety to adopt AI effectively.

Bring cross-functional teams into the conversation: AI shouldn’t sit only with IT or innovation. It must involve legal, HR, operations, and the business SMEs.

Invest in safety and governance early: It will be harder and costlier to retrofit later.

Choose implementation partners strategically, not transactionally: Choose partners who operate as an extension of your leadership, not vendors who only want to bolt on AI tools.

Don’t rely entirely on frontier providers: Understand your dependencies. Build internal capability and understanding.

Plan for acceleration, not just automation: Month-long tasks becoming automated changes entire operating models, not just workflows.

Create a balanced portfolio: productivity + innovation + safety: Overshooting on any one dimension creates risk.

But don’t make AI safety and governance a reason to delay action. Start early and keep iterating.

In short:

AI transformation is not a tech initiative. It’s an organizational redesign initiative.

6. The geopolitical and societal responsibility

Another critical theme:

While companies compete for speed and dominance, AI governance is being shaped by only a handful of entities that own these frontier models.

This imbalance is dangerous.

In fact, Anthropic’s CEO, Dario Amodei, recently said something that captures this tension perfectly: he’s “deeply uncomfortable” with the idea that a small group of tech CEOs are effectively determining the future of AI. And he’s right. We’ve reached a point where the same companies building frontier models are also self-governing their own risks.

We still lack a global governance model, a shared agreement on oversight, or even clarity on who should be empowered to make these decisions. If we want AI development to align with the public interest, we need far more than industry voices. We need educated, engaged political leadership, people who understand the stakes well enough to design transparent, democratic, and enforceable structures for accountability. This can’t be left to the private sector alone.

Global cooperation between countries, regulators, researchers, and industry is required to prevent a scenario where competitive pressure overrides safety.

AI is too powerful to be governed solely by market forces.

We need:

Public trust

Transparent research

Ethical frameworks

Robust scientific understanding

Multi-country agreements

Corporate leadership that prioritizes safety, not just performance

The future of AI cannot be decided by a few frontier labs in closed rooms.

It must be shaped collectively.

Closing Reflection: What role do we play?

If there was one thread that tied the entire lecture series together, it was this:

The potential upside of AGI is extraordinary. We could dramatically advance healthcare, reduce poverty, expand knowledge, and free humanity from mundane work.

But the risks are equally immense.

Which leaves each of us, especially those in positions of influence, with a responsibility:

To build thoughtfully and ask better questions.

To design with humanity in mind.

To think beyond ourselves and beyond the quarter.

To shape AI as a force for uplift, not replacement.

My personal takeaway, and the question I’m carrying forward, is this:

What role are we each playing in shaping a future that benefits humanity, not just technology?

I don’t have the full answer yet.

But I know exploration matters, and I hope this space becomes one where we can think about it together.
